Hi!

I'm a programmer with no experience whatsoever in electronics, so I thought... well, what could be wrong with learning electronics with Arduino?

So far everything has gone fine. I've made some simple and cool projects, learning both practice and theory, and I bought a multimeter to do some tests.

Recently I tried comparing LEDs in series with LEDs in parallel. I could get two LEDs in series to light up, but not three, and I cannot understand why.

From my understanding, the Arduino's 5V pin, which I'm using, has a 200 mA limit. If we assume that the average red LED draws 20 mA, one could use more than three LEDs without any problem.

Of course I use a resistor, specifically a 220 Ω one, so roughly 22 mA (5 V / 220 Ω) should reach a single LED (?).

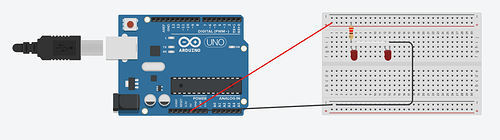

The problem is that, in simulations and in reality, this kind of circuit works fine (both LEDs turn on):

But this one does not, because there are three LEDs:

My first thought was about the voltage drop each LED has, so I used my multimeter to test (on the circuit which turns on, with two LEDs).

According to the multimeter, each LED has a voltage drop of ~1.95 V. That's a lot.

What I noticed is that the first side of the resistor, which receives 5 V, actually shows (almost) 5 V on the multimeter, but the other side shows 3.9 V! About 1 V was lost across the resistor, it seems.

Is it supposed to be like this? Isn't 1 V too much for a 220 Ω resistor? Initially, I thought that resistors only reduced current, but I read that they also have a voltage drop (in some way). Am I right?
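To make my own arithmetic explicit, here is a small Python sketch that applies Ohm's law, I = (V1 - V2) / R, to the two readings I took on either side of the resistor. The function name `resistor_current_ma` is just something I made up for illustration, and I am assuming the "almost 5 V" reading is exactly 5.0 V.

```python
# Ohm's law applied to my two multimeter readings (both taken relative to GND).
RESISTANCE_OHMS = 220.0      # the resistor in my circuit
V_BEFORE_RESISTOR = 5.0      # supply side; I measured "almost" 5 V, rounded here
V_AFTER_RESISTOR = 3.9       # LED side of the resistor


def resistor_current_ma(v_before, v_after, resistance_ohms):
    """Return the current through a resistor in mA, using I = (V1 - V2) / R."""
    drop_volts = v_before - v_after
    return drop_volts / resistance_ohms * 1000.0


current_ma = resistor_current_ma(V_BEFORE_RESISTOR, V_AFTER_RESISTOR, RESISTANCE_OHMS)
print(f"Drop across resistor: {V_BEFORE_RESISTOR - V_AFTER_RESISTOR:.2f} V")
print(f"Current through the series chain: {current_ma:.1f} mA")
```

If I have this right, a 1.1 V drop across 220 Ω means only about 5 mA is flowing through the two-LED chain, which is a lot less than the roughly 22 mA I was expecting.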

So, according to the multimeter, the circuit receives 5 V, which becomes 3.9 V after the resistor. The first LED receives that 3.9 V, and the next one receives almost 2 V because of the first LED's voltage drop.

I am doing all these measurements by connecting the multimeter's ground to Arduino's GND and the multimeter's mAVΩ to the resistor or LED's anode (depending on what I want to measure). Is that how it's supposed to be done?
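Since all my readings are taken relative to GND, I believe the drop across each component is the difference between two neighbouring readings. Here is a rough Python sketch of that bookkeeping for the two-LED circuit. The component labels and the helper `component_drops` are my own, and I am using 1.95 V for the "almost 2 V" reading at the second LED's anode.

```python
# Readings with one probe on GND, ordered from the 5V pin down to GND.
# 1.95 V stands in for my "almost 2 V" reading (an assumption on my part).
node_volts = [5.0, 3.9, 1.95, 0.0]
components = ["220 ohm resistor", "LED 1", "LED 2"]


def component_drops(node_volts, components):
    """Pair each component with the difference between the readings on its two sides."""
    return {
        name: round(upper - lower, 2)
        for name, upper, lower in zip(components, node_volts, node_volts[1:])
    }


drops = component_drops(node_volts, components)
for name, drop in drops.items():
    print(f"{name}: {drop} V")
```

Measured this way, the drops should add up to the supply voltage, which gives me a quick sanity check on the readings.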

So, what actually prevents me from having three LEDs in series? I think that the second LED, which receives 2 V and has a 1.95 V voltage drop, can't pass enough voltage on to the third. If I'm right, the limit on the number of LEDs I can have in series is probably two, not because of current but because of voltage.
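My hypothesis can be written as a quick check: add up the forward drops and see whether they still fit under the 5 V supply. This is only a sketch under a simplifying assumption, namely that each LED always drops the ~1.95 V I measured regardless of current. The helper names `headroom_volts` and `max_leds_in_series` are made up for illustration.

```python
import math

SUPPLY_VOLTS = 5.0          # Arduino 5V pin
LED_FORWARD_VOLTS = 1.95    # what I measured per LED (assumed constant here)


def headroom_volts(led_count, supply=SUPPLY_VOLTS, vf=LED_FORWARD_VOLTS):
    """Voltage left over for the resistor after led_count LEDs in series."""
    return supply - led_count * vf


def max_leds_in_series(supply=SUPPLY_VOLTS, vf=LED_FORWARD_VOLTS):
    """Largest LED count whose combined forward drop does not exceed the supply."""
    return math.floor(supply / vf)


for n in (1, 2, 3):
    left = headroom_volts(n)
    status = "fits" if left > 0 else "does not fit"
    print(f"{n} LED(s): need {n * LED_FORWARD_VOLTS:.2f} V, "
          f"headroom {left:+.2f} V -> {status}")

print("Max LEDs in series:", max_leds_in_series())
```

With my numbers, two LEDs need 3.9 V and leave 1.1 V for the resistor, while three would need 5.85 V, which is more than the pin provides.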

I know these questions have probably been answered on the forum already, but after searching today and yesterday I didn't find a clear explanation. Also, I had to ask about the multimeter, since I'm not sure I am using it correctly.

Thanks for the help and sorry for the long thread.