Hello everyone!

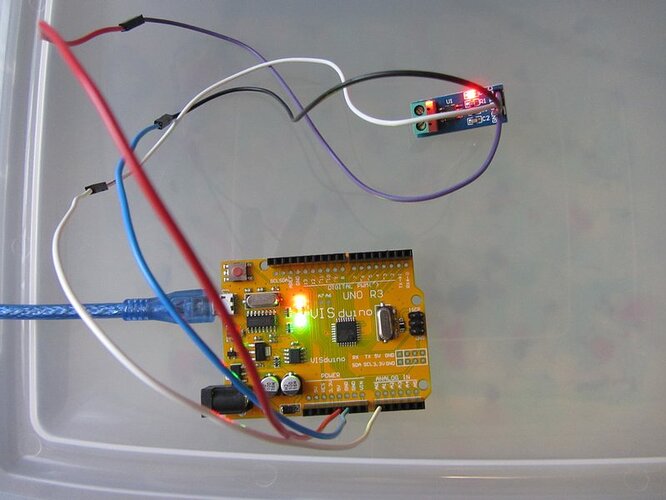

I'm trying to measure DC current with the ACS712 - 20A and it seems to work ok except for the zero current measurement. I need some help from someone who has experience with those sensors.

The code seems to be good: it works and I get the correct reading when current is flowing. If the current is very small or actually zero, I get wild fluctuations. I'm not talking about regular noise, which is to be expected; these swings are far larger than that.

With the current flowing I get 1A for 1A and 10A for 10A. That is good. When the load is disconnected, the reading jumps all over the place by as much as 9A!! So I might see readings like 0.1A, then -2.0A, then 0.0A, then 6.5A, then -9.1A. It's just wild, and it really screws up the operation of my circuit by triggering overcurrent or undercurrent alarms while the load and supply are actually disconnected! What's up with that? Any ideas?

When I disconnect the sensor from the Arduino and just let the analog pin float, I get similar wild fluctuations. I measured the voltage on the output pin of the sensor and it is steady at 2.542V with a 5.01V supply (measured with a good Fluke multimeter).

It seems to me as if there is not enough load on the Arduino's analog input pin and I'm reading random noise, but then why does it work just fine when there is current flowing? I don't get it.

I guess I should mention that I have tried three different Arduino boards (2x Uno and 1x Mega) and two separate ACS712 sensors and I always get the same results.

The idea behind measuring the zero current was to establish the sensor's base voltage and get an accurate starting point. Then a small current (about 100mA) is applied to the load for 500ms to check that the load is still present and connected. At this point I absolutely cannot set the zero point and I cannot check the presence of the load. Both with no current and with 100mA, my readings are just crazy.

An example:

The code starts by checking the voltage on the analog input to establish the sensor zero point. Supply and load are disconnected. The reading I get is... pretty much anything from -10A to 10A. Let's say it gets 3.0A as an example here. Then about 100mA is applied for 500ms and the readings range from -5A to 4A. Because the readings are averaged, the final result might come out as -1A, and that's it. That's a lot less than the expected 100mA, so the circuit goes into error mode saying that the load is disconnected.

If the check current is set to about 1A it might work better, but the initial zero point reading is all over the place, so the circuit will go into shutdown mode anyway.

I appreciate all your help.

Thanks!